Founded by Varun Agarwal

Foundation models are powerful but volatile. They hallucinate, drift, and produce brittle outputs that block scientific and engineering workflows.

The standard fixes are expensive, slow, and often destabilizing. They don't scale to the settings that matter most: multi-property, multi-scale tasks like generating new materials or optimizing genetic medicines, where verification itself is difficult, ambiguous, or costly.

Teams end up trapped in "guess and check" loops, iterating on data and retraining instead of reasoning directly about model behavior. Persistent failure modes go undetected, and creative opportunities inside models go unexploited.

Envariant moves verification upstream, from the lab directly into the model's latent space. Their SDK exposes a compact set of primitives so you can specify a property and then reliably observe and control it.

No bespoke engineering, heavy orchestration, or massive dataset requirements.

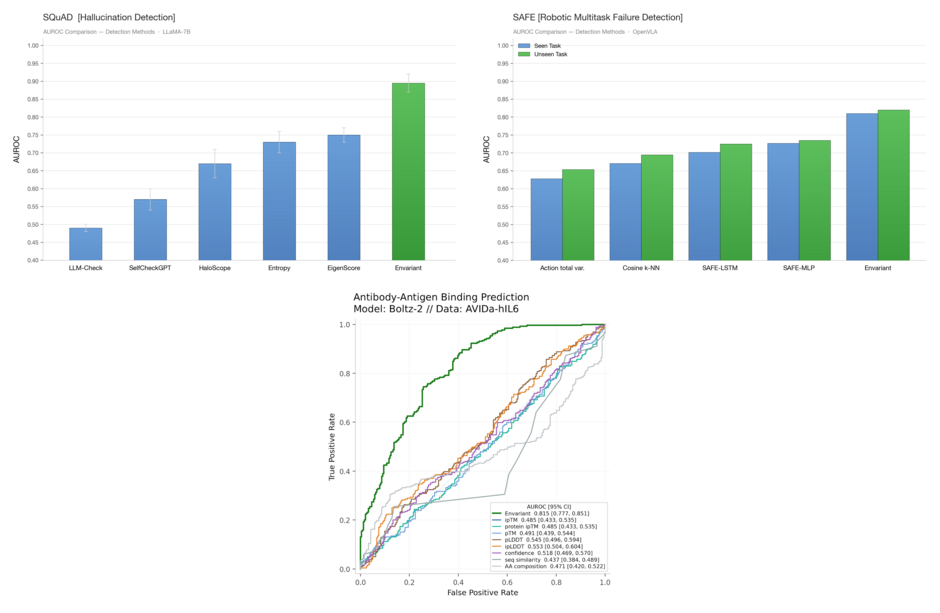

They started with failure mode detection. Using one underlying primitive, they have reached state-of-the-art (SOTA) performance in hallucination detection in text LLMs, real-time degradation detection in robotic vision-language-action (VLA) models, and antibody-binding prediction. They are releasing a beta of this for testing on March 3rd!

They will be scaling up their failure mode detection, expanding it to general property detection and measurement, and releasing experiments and SOTA results across inductive reasoning, steering, and principle extraction this week. Long term, they want to remove the bottlenecks to progress by turning mechanistic understanding into actionable capabilities, enabling teams to reliably control behavior and accelerate discovery.